The bottom line

- Supercomputers are ultra-powerful machines that perform complex calculations at exceptional speeds, enabling discoveries and solutions to society’s toughest challenges.

- They are used to design new materials, improve medical treatments, forecast extreme weather, develop advanced cybersecurity strategies and more, benefiting lives in many ways.

- NSF supports the development of, and provides access to, state-of-the-art supercomputer hardware, software and infrastructure, driving transformative discoveries and innovation.

What is a supercomputer?

The term “supercomputer” typically refers to the world’s fastest and most powerful class of computers at any given time. Supercomputers process vast and complex data by harnessing the combined power of many interconnected computer systems working simultaneously. They are primarily used for the most computationally demanding scientific and engineering work.

With decades of technological advances, today’s supercomputers are many orders of magnitude faster than those introduced in the 1960s. The earliest supercomputer performed only 3 million calculations per second, far slower than a modern smartphone. Today, some supercomputers can perform quintillions of calculations per second, over a million times faster than a smartphone.
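As a rough check on the scale of these figures, the short sketch below works through the arithmetic. It assumes that "quintillions" means at least one quintillion (10^18) calculations per second. The smartphone rate is not stated in the text, so the value computed here is only the upper bound implied by the "over a million times faster" comparison. All names in the code are illustrative.

```python
# Figures taken from the text above. The smartphone rate is inferred, not stated.
EARLIEST_OPS_PER_SEC = 3e6   # "3 million calculations per second"
MODERN_OPS_PER_SEC = 1e18    # one quintillion, the low end of "quintillions" (assumption)
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def speedup(new_rate: float, old_rate: float) -> float:
    """Return how many times faster new_rate is than old_rate."""
    return new_rate / old_rate


def seconds_to_match(work_seconds: float, fast_rate: float, slow_rate: float) -> float:
    """Seconds the slower machine needs to do what the faster one does in work_seconds."""
    return work_seconds * fast_rate / slow_rate


# Modern supercomputer compared with the earliest one.
ratio = speedup(MODERN_OPS_PER_SEC, EARLIEST_OPS_PER_SEC)

# Time the earliest machine would need to reproduce one second of modern work.
years = seconds_to_match(1.0, MODERN_OPS_PER_SEC, EARLIEST_OPS_PER_SEC) / SECONDS_PER_YEAR

# Upper bound on smartphone speed implied by "over a million times faster".
implied_phone_rate = MODERN_OPS_PER_SEC / 1e6

print(f"Speedup over the earliest supercomputer: {ratio:.2e}x")
print(f"Years for the earliest machine to match one modern second: {years:,.0f}")
print(f"Implied smartphone rate (upper bound): {implied_phone_rate:.0e} per second")
```

Under these assumptions, a modern system is a few hundred billion times faster than the earliest supercomputer, which would need on the order of 10,000 years to reproduce a single second of modern output.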

Why are supercomputers important?

All fields of science and engineering, as well as medicine and social sciences, increasingly depend on supercomputers to yield transformative breakthroughs. By efficiently performing complex analyses and simulations, supercomputers solve problems that would be too time-consuming, costly or even impossible for standard computers. Examples include:

- Simulating drug interactions and efficacy, thereby accelerating the development of lifesaving treatments and cures for disease.

- Modeling the behavior of large and complex molecules to develop stronger, more durable or lighter materials that enhance performance, safety and efficiency across a range of applications.

- Predicting the strength and future path of hurricanes more accurately and quickly, allowing for better preparation that saves lives and minimizes economic losses.

- Analyzing plant genetics and biology to develop higher-yielding, more-resilient crops.

- Optimizing manufacturing processes to boost performance, improve productivity and decrease costs.

- Enabling the most precise measurements yet of the universe’s expansion and helping scientists determine how the first stars in the universe formed.

What opportunities remain?

As data-intensive applications continue to grow, particularly those powered by artificial intelligence, the demand for supercomputing resources is rising rapidly. At the same time, these workloads consume enormous amounts of energy, creating new challenges for power availability and long-term scalability.

These constraints are prompting researchers to explore new approaches for advanced computing. One promising direction is neuromorphic computing. Unlike conventional computing, which requires time- and energy-intensive data transfer between separate processing and memory units, neuromorphic computing mimics the brain’s efficient network of neurons by processing and storing information as an integrated part of the same operation. In parallel, quantum computing, though still experimental, offers the potential to perform certain complex calculations much faster than today’s supercomputers.

To realize the full potential of advanced computing and its real-world benefits, the U.S. must also invest in building a skilled workforce. This requires expanding opportunities for hands-on training, including access to leading experts and world-class facilities, that empower new researchers to use advanced computing tools for discovery and innovation. NSF is already leading these efforts through initiatives such as NSF Training-based Workforce Development for Advanced Cyberinfrastructure and NSF Experiential Learning for Emerging and Novel Technologies, which focus on training and growing the national workforce in advanced cyberinfrastructure and emerging technologies.

NSF investments in supercomputers

Laying the groundwork

The NSF Supercomputer Centers Program, in operation from 1985 to 1999, established the U.S. as a world leader in computational science. These centers expanded access to state-of-the-art supercomputer resources from only two universities in the early 1980s to over 200 universities by the early 1990s. Some key successes of the program were:

- The 1986 creation of NSFNET, a network that connected researchers to the supercomputer centers. NSFNET served as the internet’s backbone until its commercialization in the mid-1990s and led directly to today’s internet.

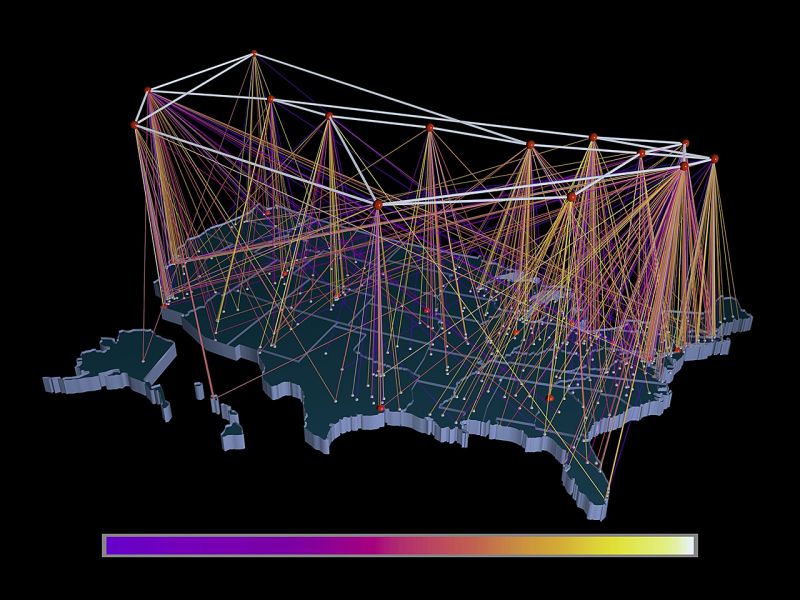

- Groundbreaking visualization tools that improved the interpretation of large data sets, such as tracking thunderstorms as they develop.

- Faster, more accurate simulations of molecules that enabled the development of the first asthma drug.

- The development of Mosaic, the world’s first freely available web browser, which allowed web pages to include both graphics and text. Mosaic grew the web community exponentially and led to the development of modern browsers.

- Advanced models that allowed engineers and industry partners to design better consumer products, such as leakproof diapers; refine industrial processes, such as metal forming; and improve traffic safety.

Taking supercomputers into the future

Through programs like NSF Advanced Cyberinfrastructure Coordination Ecosystem: Services & Support, NSF continues to provide researchers nationwide with supercomputing resources, driving discovery and innovation. For example, researchers simulated brain activity in Parkinson’s disease, uncovering details that could enable more targeted treatments. Another group developed simulations to design materials that improve chemical recovery and purification in various industries.

In November 2025, NSF began installing Horizon, the nation’s fastest academic supercomputer. Horizon’s simulation speed will provide unprecedented computing and AI capabilities. NSF-supported researchers are also breaking ground on next-generation computing technologies, from building a prototype neuromorphic computer to beginning construction of a quantum computer accessible to researchers nationwide.

NSF’s ongoing investments are expanding the limits of computing, driving discoveries, enhancing prosperity and bolstering national security.
