What is co-design? It is what you have to do if you really want to find the optimal way to build a system that works through the interplay of different layers. In the case of physics experiments these can be safely identified as “hardware” and “software”, although one could zoom in further into geometry, materials, data collection and reduction strategies, pattern recognition, inference extraction, and so on.

Considering hardware and software as the two domains that make up an experiment, it should be obvious (although it was not recognized as such until recently) that optimizing the hardware of the system irrespective of your software choices will result in a sub-optimality later on, when you find out that the choices you made force you into a corner of the phase space of software tools you can use to perform the inference extraction at the basis of the final measurement. In other words, if there is a coupling (e.g. due to correlations, constraints, budget ceilings, etcetera) between the two sub-domains, then you really need to consider them together when you search for the working point of your apparatus. A serial optimization of hardware and then software will instead produce a misalignment of the two, and a resulting suboptimality.
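To make the point concrete, here is a minimal toy sketch in Python. Everything in it is an assumption made for illustration: the quadratic `utility` function, the coupling strength of 1.5, the scan grid, and the default software setting `s_default` are invented and have nothing to do with the models used in the paper. It only shows how fixing the software at a default while tuning the hardware, and then tuning the software afterwards, can land far from the joint optimum when the two are coupled.

```python
# Toy example: serial versus joint optimization of two coupled parameters.
# The utility function below is invented for illustration only.

def utility(h, s):
    """Hypothetical utility of a 'hardware' setting h and a 'software' setting s.

    The last term couples the two parameters: the best h depends on s.
    """
    return -(h - 1.0) ** 2 - (s - 1.0) ** 2 + 1.5 * h * s

# Common scan grid for both parameters: 0.0 to 6.0 in steps of 0.05.
GRID = [i / 20 for i in range(121)]

def serial_optimum(s_default=0.0):
    """Optimize h with s frozen at a default, then optimize s with h frozen."""
    h_best = max(GRID, key=lambda h: utility(h, s_default))
    s_best = max(GRID, key=lambda s: utility(h_best, s))
    return h_best, s_best

def joint_optimum():
    """Scan both parameters at the same time."""
    return max(((h, s) for h in GRID for s in GRID), key=lambda p: utility(*p))

h_ser, s_ser = serial_optimum()
h_jnt, s_jnt = joint_optimum()
ratio = utility(h_jnt, s_jnt) / utility(h_ser, s_ser)

print(f"serial: h={h_ser}, s={s_ser}, U={utility(h_ser, s_ser):.4f}")
print(f"joint:  h={h_jnt}, s={s_jnt}, U={utility(h_jnt, s_jnt):.4f}")
print(f"joint / serial utility ratio: {ratio:.2f}")
```

In this toy the gap is exaggerated on purpose; setting the coupling coefficient to zero makes the serial and joint answers coincide, which is the whole point of the argument above.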

The paper includes a discussion of two important subsystems for HEP – tracking and calorimetry – as well as of use cases in gamma-ray astronomy, ultra-high energy neutrino detectors, muon tomography, neutron detection, and gravitational wave detection. Some of these are worked out in full and not only demonstrate where the mentioned sub-optimality arises but go all the way to try and quantify it in specific examples.

My contribution in this sense was the analysis of the SWGO gamma-ray detector array, where I considered the simultaneous optimization of the positions on the ground of hundreds of Cherenkov detectors, the triggering strategies, the detailed unit performances, and a detailed cost model that included land and fencing cost, cabling, and counting rooms. The resulting strongly coupled system can be optimized serially, or holistically by a hybrid gradient descent plus reinforcement learning strategy.

Utility for a complex scientific experiment is seldom easy to define, but in this case a good proxy is the inverse of the time required for a 5-sigma discovery of a point source of gamma rays in the sky. This can be formulated in a way that factors in the energy and pointing resolution, along with the effective area of the array (which is proportional to the flux of reconstructable gamma rays). An added benefit of this is that one can study the components of the utility and their interplay, in the large configuration space of the possible optimal solutions.
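As a rough illustration of how such a proxy can be built, here is a minimal Python sketch. It is not the formula used in the paper: it assumes a simple counting experiment where the significance is approximated by signal counts over the square root of background counts, with the background collected inside a circle whose radius is the pointing resolution. The function names, parameters, and units are hypothetical, and the energy resolution, which the actual utility does factor in, is left out for brevity.

```python
import math

def time_to_discovery(signal_flux, background_flux, effective_area,
                      pointing_resolution, n_sigma=5.0):
    """Observation time (s) needed to reach n_sigma, with S ~ N_sig / sqrt(N_bkg).

    Assumed units (hypothetical): signal_flux in photons / (m^2 s),
    background_flux in events / (m^2 s sr), effective_area in m^2,
    pointing_resolution in radians.
    """
    # Solid angle of the search region around the source (small-angle approximation).
    solid_angle = math.pi * pointing_resolution ** 2
    signal_rate = signal_flux * effective_area
    background_rate = background_flux * effective_area * solid_angle
    # n_sigma = signal_rate * T / sqrt(background_rate * T), solved for T.
    return n_sigma ** 2 * background_rate / signal_rate ** 2

def utility(signal_flux, background_flux, effective_area, pointing_resolution):
    """Utility proxy: inverse of the time to a 5-sigma discovery."""
    return 1.0 / time_to_discovery(signal_flux, background_flux,
                                   effective_area, pointing_resolution)

# Placeholder inputs, chosen only to exercise the functions.
base = utility(1e-7, 1e-2, 1e5, 0.005)
bigger_array = utility(1e-7, 1e-2, 2e5, 0.005)
sharper_pointing = utility(1e-7, 1e-2, 1e5, 0.0025)
print(bigger_array / base, sharper_pointing / base)
```

Under these assumptions the utility grows linearly with the effective area and with the inverse square of the pointing resolution, which is why trading one against the other is not a trivial choice.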

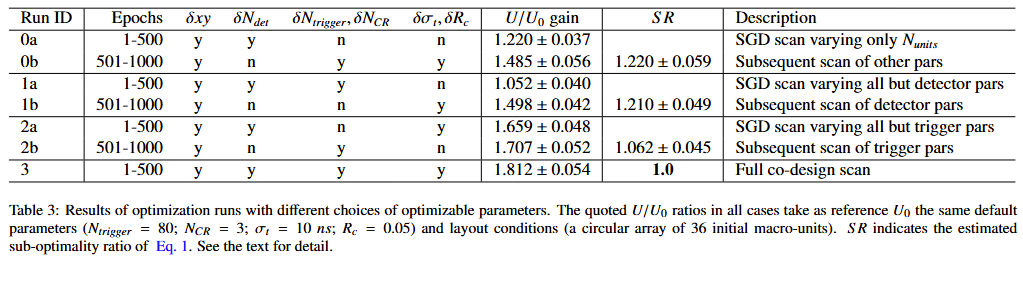

The table below shows how much one “loses” in terms of the utility function of the experiment if one applies a serial optimization strategy.

Runs 0, 1, and 2 are each divided into two parts, with only a subset of the parameters scanned for optimal solutions in each of the two steps. Run 3 is the holistic one, where everything is considered at the same time. As you can see, the “suboptimality ratio”, defined as the ratio of the utility of the holistic run to that of a serial strategy, exceeds unity by 6 to 20%, indicating the advantage of co-design.

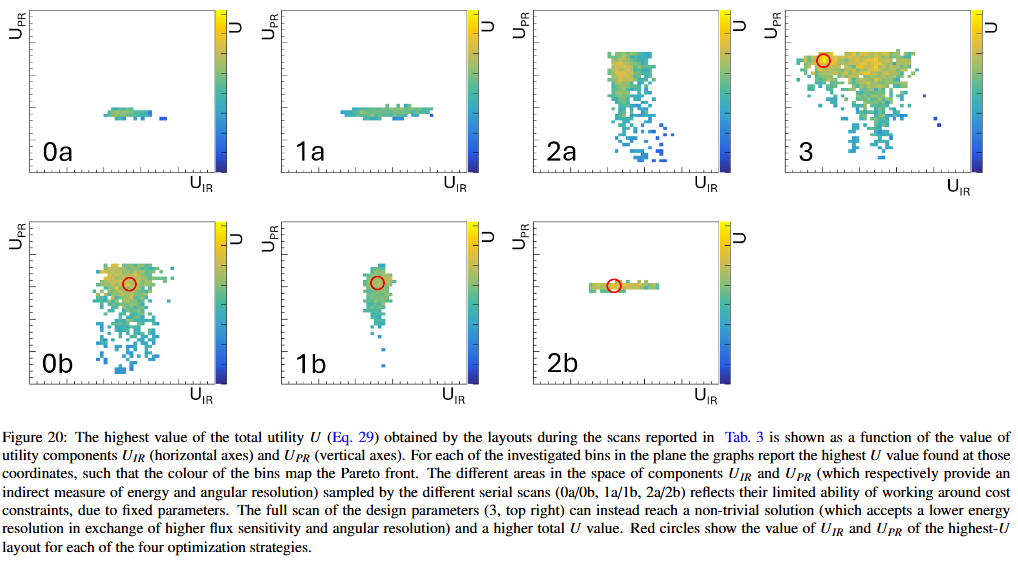

It is interesting to examine the Pareto front sampled by the serial optimization approaches and compare it to the range probed by the simultaneous optimization of all parameters. One discovers that the serial strategies fail to consider configurations where energy resolution is sacrificed (by lowering the triggering threshold and admitting more independent counting stations) for the sake of a better pointing resolution (see red circle in the top right graph).
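For readers unfamiliar with the concept, the sketch below shows in Python how a Pareto front can be extracted from a set of sampled configurations. The numbers are placeholders invented for the example, not results from the paper, and I assume that both quantities are expressed as resolutions where smaller means better. The `pareto_front` helper is a plain quadratic-time filter, adequate for a handful of points.

```python
def pareto_front(points):
    """Return the non-dominated points, assuming every coordinate is to be minimized.

    A point q dominates p if q is no worse than p in every coordinate
    and differs from p (hence strictly better in at least one).
    """
    front = []
    for p in points:
        dominated = any(
            q != p and all(qi <= pi for qi, pi in zip(q, p))
            for q in points
        )
        if not dominated:
            front.append(p)
    return sorted(front)

# Placeholder configurations: (energy resolution, pointing resolution).
serial_samples = [(0.30, 0.20), (0.25, 0.25), (0.35, 0.22)]
# The holistic scan is assumed to also reach a configuration that gives up
# energy resolution in exchange for much better pointing resolution.
holistic_samples = serial_samples + [(0.45, 0.10)]

serial_front = pareto_front(serial_samples)
holistic_front = pareto_front(holistic_samples)
missed = [p for p in holistic_front if p not in serial_front]

print("serial front:  ", serial_front)
print("holistic front:", holistic_front)
print("missed by the serial scan:", missed)
```

The extra point on the holistic front plays the role of the configurations inside the red circle: it is worse in one objective, so a scan that never visits that region has no way of knowing it pays off in the other.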

Overall, the article presents itself as a snapshot of the complexity of modern-day scientific experiments in fundamental physics, and an examination of the techniques that can be used to tackle it. The industrial use cases provided in Section 4 show how similar issues are handled in settings where the target is not a measurement of some physical quantity of interest, but rather the performance of market products or industrial procedures.

I will have much more to say about different studies contained in this article (it is 90 pages long!) in the near future, so I figure this should suffice as a sneak peek into its contents. To be continued!