Dave Chappelle told the AP recently:

> One of the worst things that can happen to a comedian is becoming successful before they get good. Because you miss the part where you get to explore and make mistakes.

A new paper in Nature Reviews Neuroscience by Lisa Feldman Barrett and Earl K. Miller explains why. Before you process sensory input, your brain has already constructed a category based on prior experience, current needs, and a predicted action plan. Incoming signals get compressed into that prediction. The brain doesn’t receive evidence and then decide. It decides and then receives evidence.

The architecture is lopsided. As much as 90% of synapses in the visual cortex carry feedback signals from memory, not feedforward signals from the senses. Beta frequency waves carrying goals and plans constrain gamma waves carrying sensory specifics. The system is built to confirm, not to discover.

Barrett puts it plainly:

> The stimulus, cognition, response model of the brain is wrong. The brain prepares for a response and then perceives a stimulus. A brain is not reactive. It’s predictive. Action planning comes first. Perception comes second, as a function of the action plan.

None of this is new.

Linguistic anthropology has been saying it for a century. Sapir and Whorf argued that language categories shape perception before evidence arrives. Boas documented how culturally constructed categories determine what counts as data. The entire tradition of cultural relativism rests on the observation that humans don’t perceive first and categorize second. Our 419 scam research showed the same mechanism at the social level: the mark’s categorical predictions (trust, greed, opportunity) suppress disconfirming signals in the data until the money is gone.

What Barrett and Miller add is synapses and beta waves. They’ve given neuroscience a mechanism for something fieldwork established generations ago.

Everything does this.

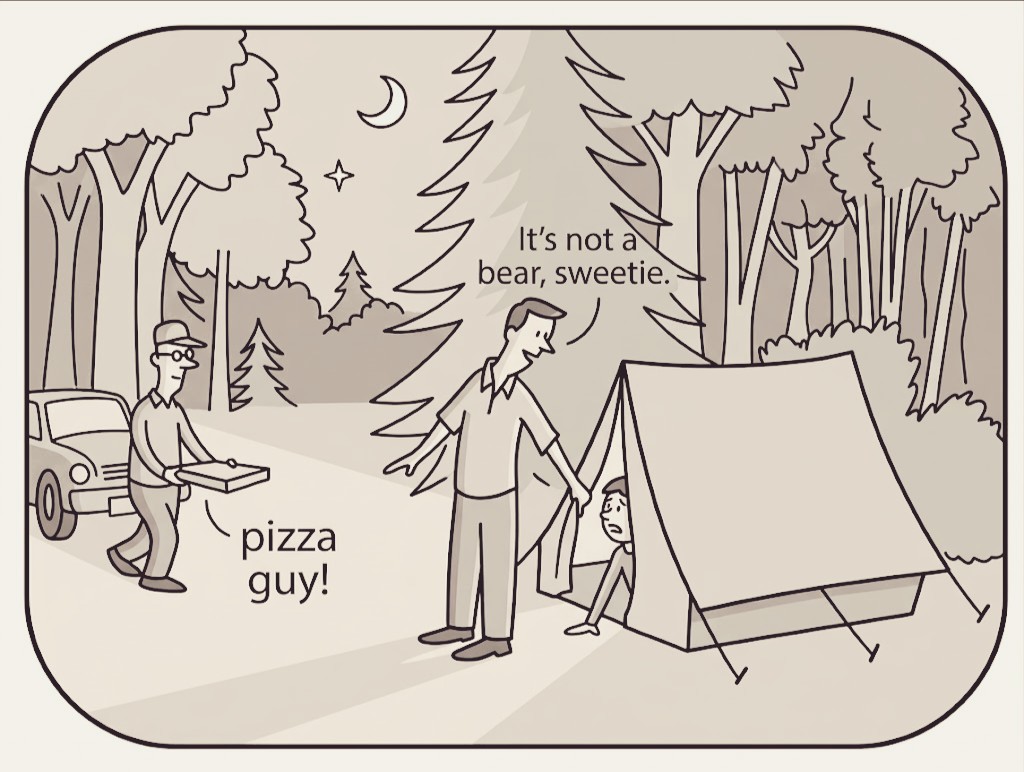

Special operations and intelligence work are supposed to be the disciplines where categorical calibration matters most. A Delta Force commander named Pete Blaber formulated a principle he called “Don’t Get Treed by a Chihuahua”: don’t impose the wrong threat category on incoming data and take extreme self-limiting action based on a misidentification. That’s Barrett and Miller’s model stated as tactical doctrine. The operator who categorizes every sound in the dark as “bear” will exhaust himself climbing trees. The one who categorizes every sound as “nothing” will get killed. Calibration is survival.

But Blaber’s own cultural priors were so uncalibrated that he believed Cat Stevens was the most famous celebrity convert to Islam, when Muhammad Ali obviously towered above him in the data. His feedback architecture on Islamic culture had never been tested by prediction error, so it never updated. Here was a special operations commander with access to the best tools on the planet, wandering along a flat line of cultural ignorance about Islam while giant mountains of evidence stood right above him, unexplored.

Intelligence operations face the same structural problem. An analyst arrives at a data stream with a category already constructed: “threat,” “insurgent,” “enemy combatant.” Incoming signals get compressed into that prediction. Disconfirming evidence (the villager who is just a villager, the communication that is just a communication) gets suppressed by the 90/10 feedback-to-feedforward ratio. The category shapes the evidence, not the other way around.

Barrett and Miller describe this as efficient allostasis. In institutional form, it is something else.

ICE executes an innocent American in Minneapolis, and a Silicon Valley billionaire announces that “no law enforcement has shot an innocent person.” The shooting creates the guilt that justifies the shooting. There is no possible prediction error because the category “innocent dead person” has been defined as inherently empty. A military command designates targets before ground truth arrives. Palantir automates the whole broken process, speeding up disaster.

The paper identifies two pathological modes.

- Depression: overly broad threat categorization imposed on situations that don’t require it.

- Autism: inadequate compression, treating every input as novel, failing to generalize.

Both are failures of categorical calibration.

The first produces false positives that destroy lives. The second produces paralysis. But Barrett and Miller don’t address a third failure mode, the one that matters most for power.

Learning, in their model, happens through prediction error. When your categorical predictions fail, surprise gets integrated and the system updates. That’s the reset mechanism. But the 90/10 feedback-to-feedforward ratio means the architecture actively suppresses disconfirming signals. You need sustained, consequential prediction failure to force categorical restructuring.

Power in the sense of control, wealth for example, eliminates prediction error.

If your categories are never tested against reality, they never update. You can construct an environment where your priors are confirmed by every input, because you select the inputs. You hire people who compress information into your existing categories. You fund institutions that broadcast your predictions back to you as findings. You build companies that do this at scale.
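
For readers who think in code, here is a toy sketch of that argument. It is not Barrett and Miller’s model. It simply assumes a belief is a number between 0 and 1, borrows the 90/10 figure as a blending weight, and invents everything else (`update`, `run`, the `accept` filter, the 0.2 tolerance) for illustration.

```python
# Toy sketch only: a belief nudged by incoming signals.
# Assumption: the 90/10 ratio is reused here as a blending weight.
FEEDBACK_WEIGHT = 0.9


def update(belief, signal, feedback_weight=FEEDBACK_WEIGHT):
    """Blend the existing belief with one incoming signal."""
    return feedback_weight * belief + (1 - feedback_weight) * signal


def run(belief, signals, accept=lambda belief, signal: True):
    """Feed signals to the belief; `accept` decides which ones ever arrive."""
    for signal in signals:
        if accept(belief, signal):
            belief = update(belief, signal)
    return belief


prior = 1.0            # hypothetical: "this is a threat", on a 0..1 scale
reality = [0.0] * 30   # hypothetical: every observation says "no threat"

# One disconfirming signal versus thirty in a row.
one_surprise = run(prior, reality[:1])
sustained = run(prior, reality)

# Curated environment: only signals close to the current belief get through.
curated = run(prior, reality, accept=lambda b, s: abs(b - s) < 0.2)

print(f"after 1 disconfirming signal:   {one_surprise:.2f}")
print(f"after 30 disconfirming signals: {sustained:.2f}")
print(f"after 30 filtered signals:      {curated:.2f}")
```

Under these assumptions a single surprise moves the belief from 1.00 to 0.90, thirty in a row drag it down to about 0.04, and the filtered run never moves off 1.00 because no disconfirming signal is ever admitted.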

I’ve written before about how Peter Thiel’s father Klaus strategically relocated his family through a series of Nazi-sympathetic enclaves, from Germany to Swakopmund to Reagan’s California, each time fleeing the prospect of democratic accountability. The categorical priors installed in that childhood (racial hierarchy as natural order, extraction as economic soundness, authoritarianism as operational efficiency) are exactly what Barrett and Miller’s model predicts would become permanent architecture. And the result is what I described years ago as the false paranoia fundamental to Nazism: someone who perceives existential threats everywhere, monsters under the bed that do not actually exist. Barrett would call that pathological overgeneralization of the threat category.

Thiel built an empire on it.

Palantir is, in a precise neuroscientific sense, a machine for imposing categorical predictions on incoming data and suppressing signals that don’t fit the action plan. It replicates the brain’s feedback-dominant architecture at the scale of national intelligence. In Iraq, Palantir’s “God’s Eye” nearly killed a farmer because it misidentified his hat color at dawn. Military intelligence on the ground said if you doubt Palantir, you’re probably right. But the system had no mechanism for integrating that doubt. False positives at checkpoints radicalized the communities being falsely flagged, which eventually confirmed the original threat predictions.

The categorical errors generated the evidence that validated the categories.

Blaber understood that an operator must calibrate threat categories against reality or die. Palantir removed the operator from the loop entirely, replacing calibration with automation, and the result was a self-fulfilling prophecy that created the terrorists it promised to find. That’s why I called Palantir the self-licking ISIS-cream cone.

The question Barrett and Miller raise without answering: what happens when the system is designed so that prediction errors never reach the organism?

The humanities are a trained feedback mechanism that catches categorical errors, and the CEO of Palantir is specifically campaigning to eliminate that layer. A working-class person with humanities training is Palantir’s worst customer because they can spot the prediction failures the system is designed to suppress.

The brain can reset its priors. The architecture allows it. But only if predictions fail hard enough, consistently enough, that the feedback loop can’t absorb the error. Power as control is the ability to make your predictions unfalsifiable. Wealth is one mechanism that makes inaccurate priors (e.g. racism) neurologically permanent.